ProPR

Memory-aware AI pull request review across Azure DevOps, GitHub, GitLab, and Forgejo-family SCM providers with protocol-grade traceability, webhook automation, and flexible Azure OpenAI, OpenAI, and LiteLLM connections.

A Closer Look

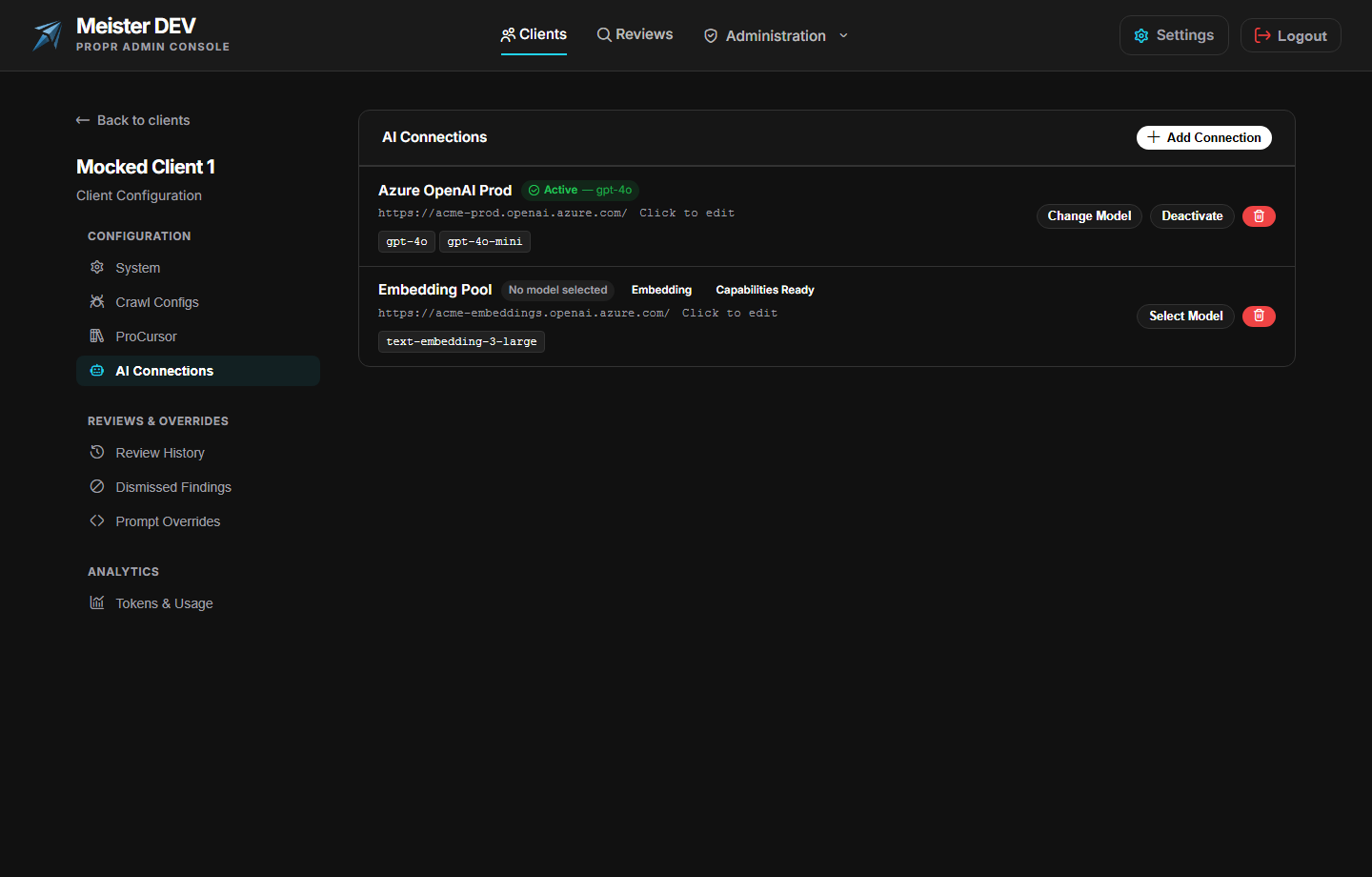

Choose AI Providers Per Client

Each client can configure Azure OpenAI, OpenAI, or LiteLLM profiles, verify connectivity, discover models, and bind different runtimes to review, memory reconsideration, and embedding workloads without redeploying the service.
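As a rough illustration of purpose-bound profiles, the sketch below models one client's AI configuration in Python. It is not ProPR's actual data model: the class names, field names, provider strings, and placeholder model names are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Purpose(Enum):
    # The three workloads named above; the identifiers are illustrative.
    REVIEW = "review"
    MEMORY_RECONSIDERATION = "memory_reconsideration"
    EMBEDDING = "embedding"


@dataclass(frozen=True)
class AiProfile:
    # Hypothetical shape of one configured connection.
    name: str
    provider: str  # assumed values: "azure_openai", "openai", "litellm"
    model: str


@dataclass
class ClientAiConfig:
    profiles: dict[str, AiProfile] = field(default_factory=dict)
    bindings: dict[Purpose, str] = field(default_factory=dict)

    def add_profile(self, profile: AiProfile) -> None:
        self.profiles[profile.name] = profile

    def bind(self, purpose: Purpose, profile_name: str) -> None:
        # Only profiles that already exist for this client can be bound.
        if profile_name not in self.profiles:
            raise KeyError(f"unknown profile: {profile_name}")
        self.bindings[purpose] = profile_name

    def resolve(self, purpose: Purpose) -> AiProfile:
        # No shared fallback in this sketch: an unbound purpose is an error.
        try:
            return self.profiles[self.bindings[purpose]]
        except KeyError:
            raise LookupError(f"no profile bound for {purpose.value}") from None

    def unbound_purposes(self) -> list[Purpose]:
        return [p for p in Purpose if p not in self.bindings]


config = ClientAiConfig()
config.add_profile(AiProfile("primary", "azure_openai", "example-chat-model"))
config.add_profile(AiProfile("vectors", "litellm", "example-embedding-model"))
config.bind(Purpose.REVIEW, "primary")
config.bind(Purpose.EMBEDDING, "vectors")
```

Because bindings are plain data, changing which model serves a workload is a configuration update, not a code change, which is the property the paragraph above describes.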

Reviews That Remember

Resolved threads and admin dismissals become semantic memory records. When a pull request changes, ProPR can retrieve similar prior decisions, reconsider its findings, and avoid reposting the same noise.
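The retrieval step can be pictured as a nearest-neighbour lookup over stored embeddings. The sketch below is a simplified stand-in, not ProPR's implementation: the record fields, the cosine-similarity scoring, and the 0.85 threshold are assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryRecord:
    # Hypothetical record built from a resolved thread or an admin dismissal.
    summary: str
    outcome: str  # assumed values: "resolved" or "dismissed"
    embedding: tuple[float, ...]


def cosine_similarity(a, b) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def most_similar(finding_embedding, records, threshold=0.85):
    """Return the closest prior decision at or above the threshold, else None."""
    best, best_score = None, threshold
    for record in records:
        score = cosine_similarity(finding_embedding, record.embedding)
        if score >= best_score:
            best, best_score = record, score
    return best


def is_probable_repeat(finding_embedding, records, threshold=0.85) -> bool:
    # A match marks the finding as a candidate for reconsideration
    # rather than for posting as a fresh comment.
    return most_similar(finding_embedding, records, threshold) is not None
```

In practice the embeddings would come from the model bound to the client's embedding workload, and a match would feed a reconsideration step instead of a hard yes/no rule.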

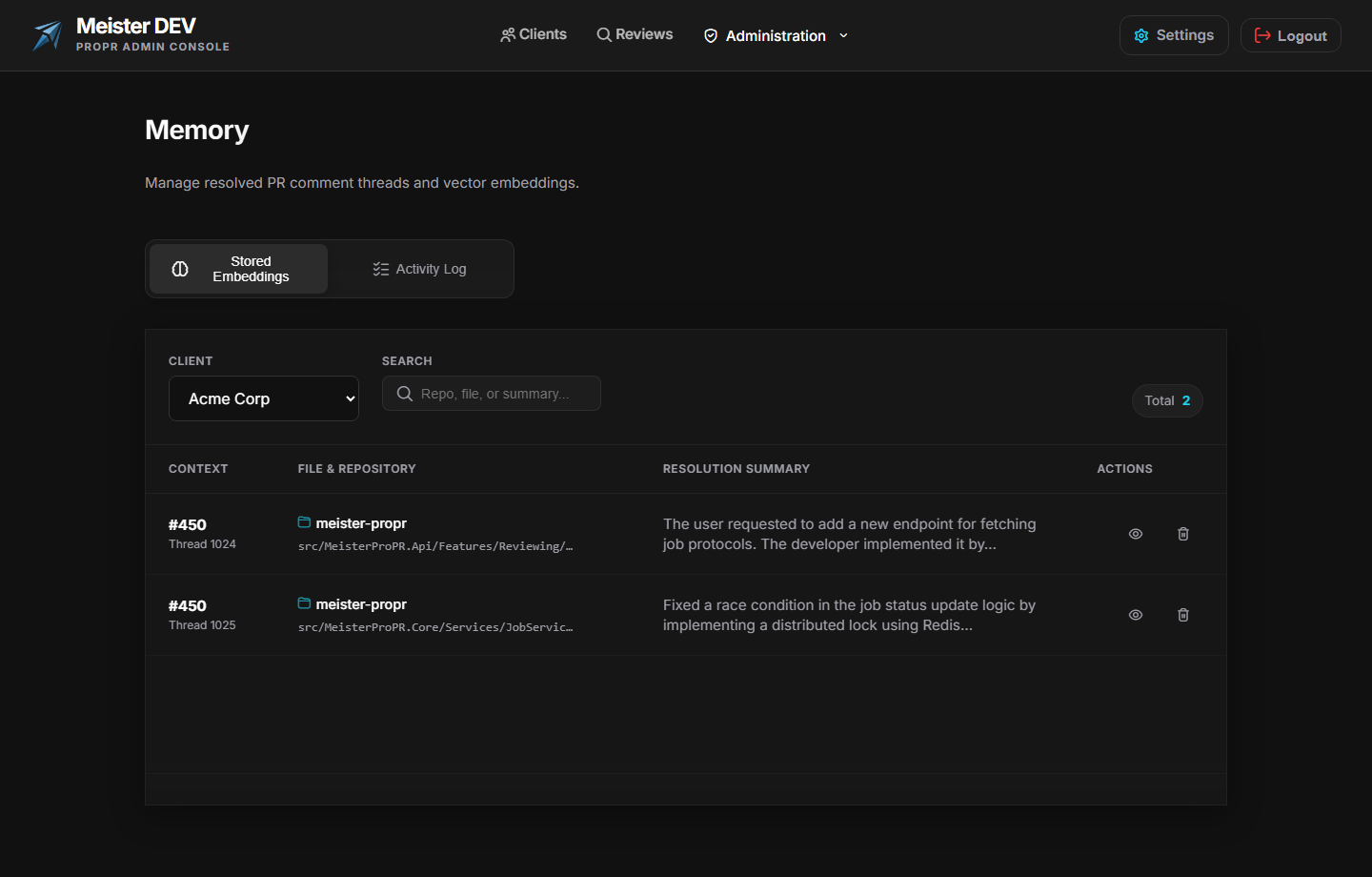

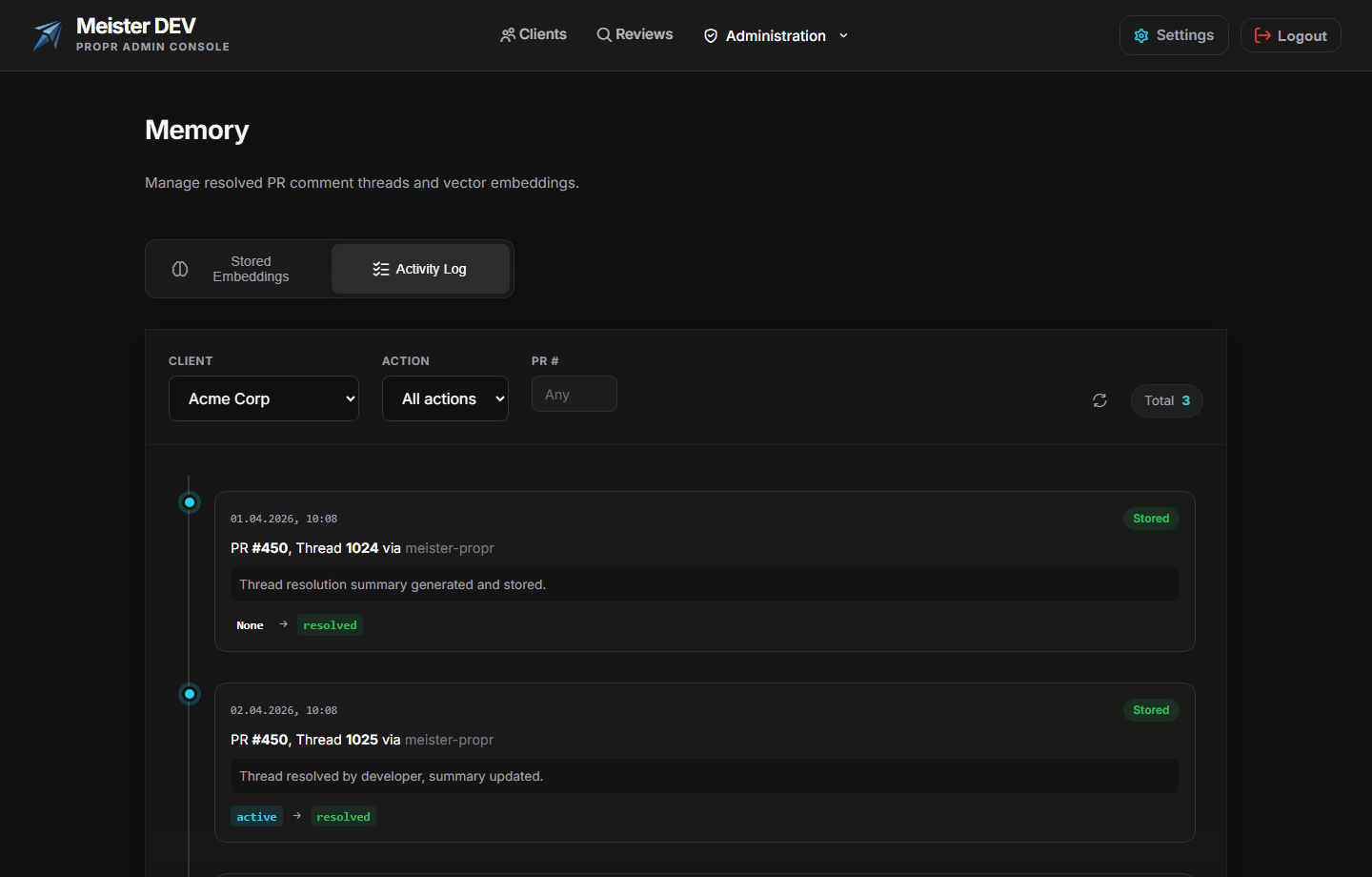

Audit The Memory Lifecycle

Thread Memory includes a searchable embedding store plus an activity log for stored, removed, and skipped records. Admins can see how memory evolves instead of treating it like a black box.

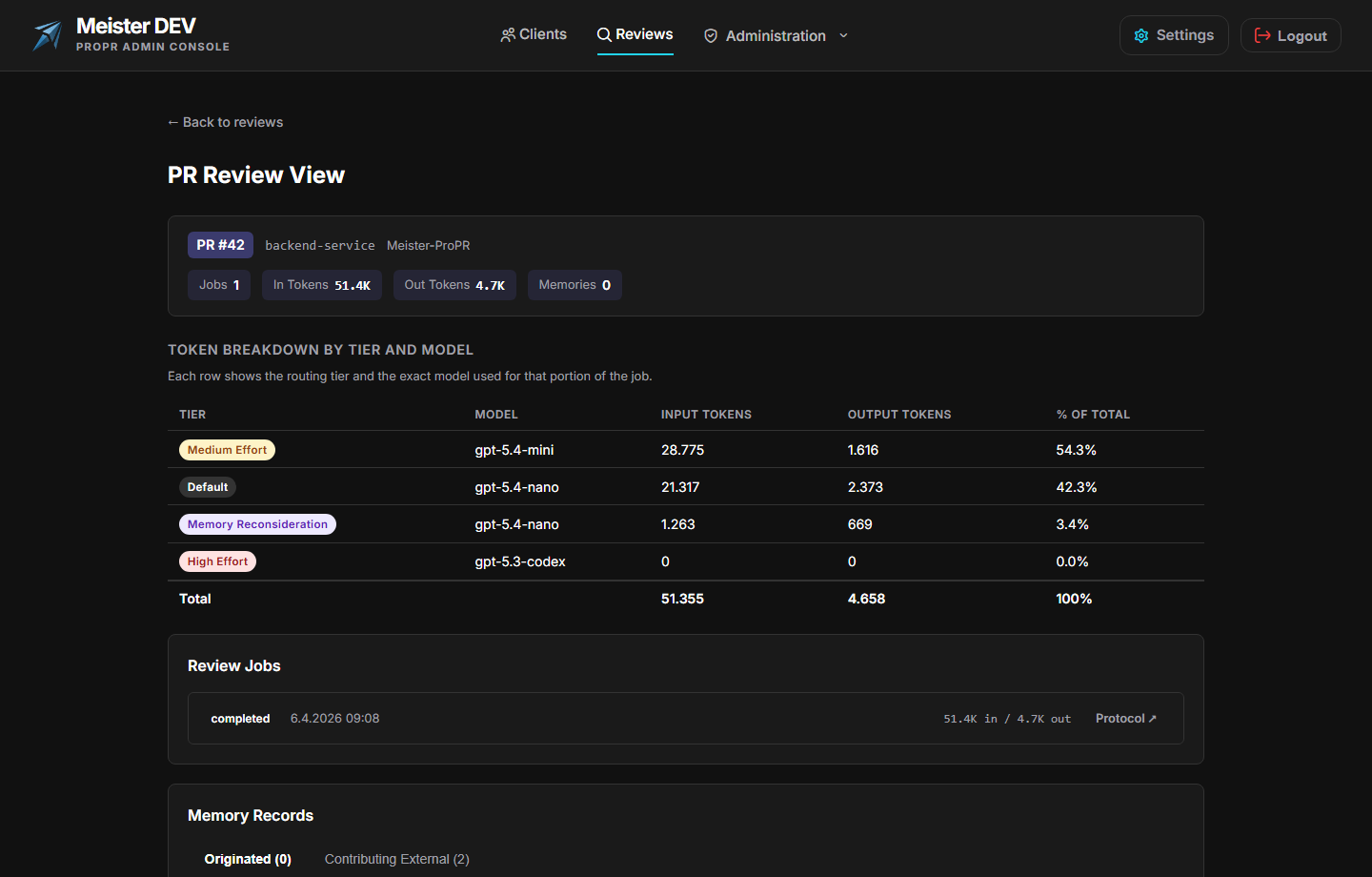

See The Whole Pull Request

The PR Review view aggregates every review job, token tier, and contributing memory record for a pull request, giving teams a complete operational story before they act on findings.

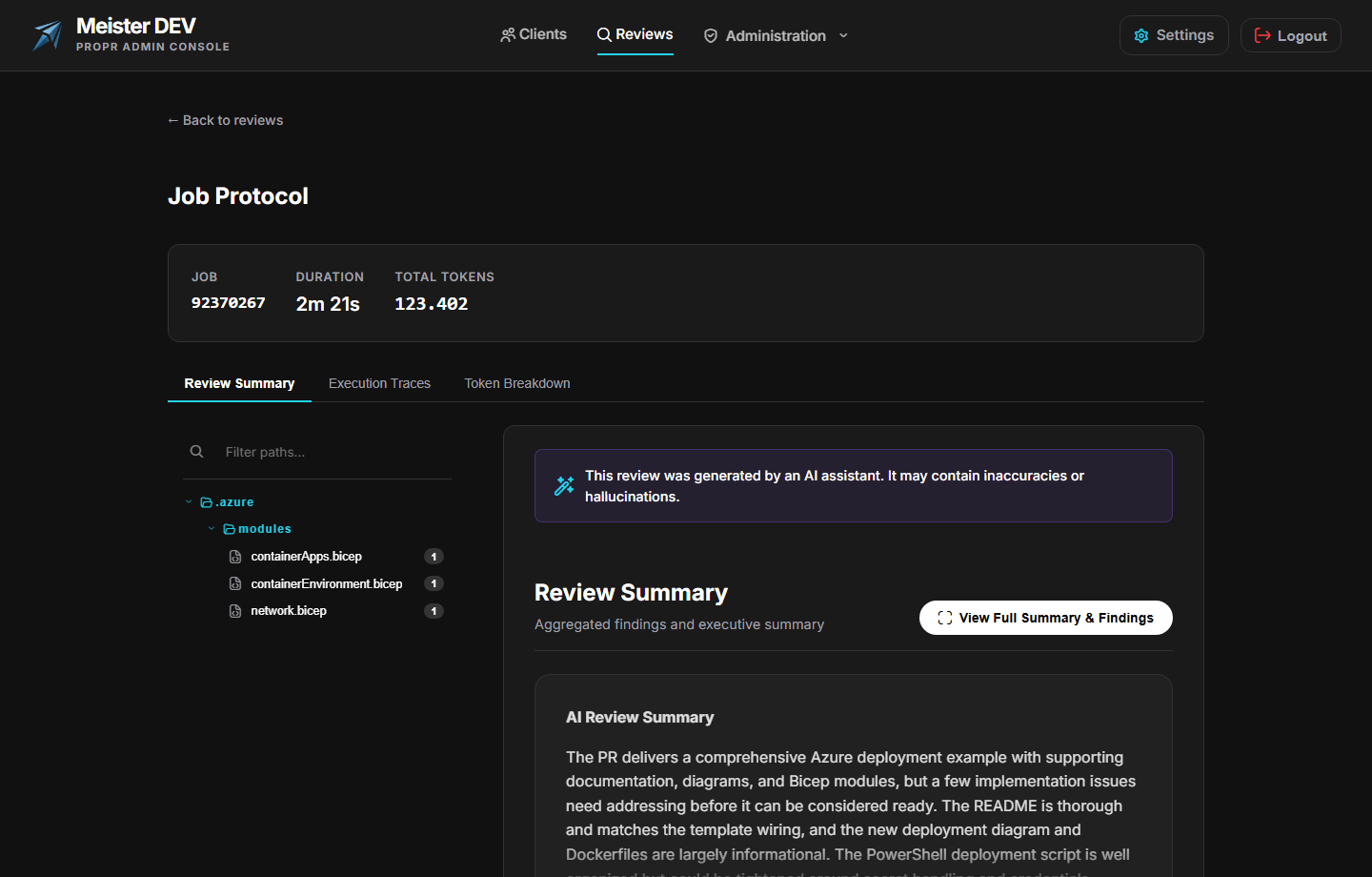

Inspect Each Review Pass

Job Protocols capture review summaries, findings, file-level context, and execution detail so teams can move from a pull-request rollup to the exact review pass behind it.

Track Token Usage Over Time

The Tokens & Usage dashboard makes reviewer traffic visible over time, so teams can watch input and output volume, compare effort tiers, and govern long-running rollouts with real numbers.
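To make the rollup concrete, here is a small sketch of how per-call usage events could be grouped by day and effort tier. The event shape and the tier labels are assumptions for illustration and do not reflect ProPR's storage schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class UsageEvent:
    # Hypothetical record of one model call made during a review.
    day: date
    tier: str  # illustrative effort-tier label
    input_tokens: int
    output_tokens: int


def summarize_usage(events):
    """Sum input and output tokens for each (day, tier) pair."""
    totals = defaultdict(lambda: {"input": 0, "output": 0})
    for event in events:
        bucket = totals[(event.day, event.tier)]
        bucket["input"] += event.input_tokens
        bucket["output"] += event.output_tokens
    return dict(totals)
```

Keeping input and output counts separate matters because providers commonly price them differently, so a single combined total would hide where spend comes from.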

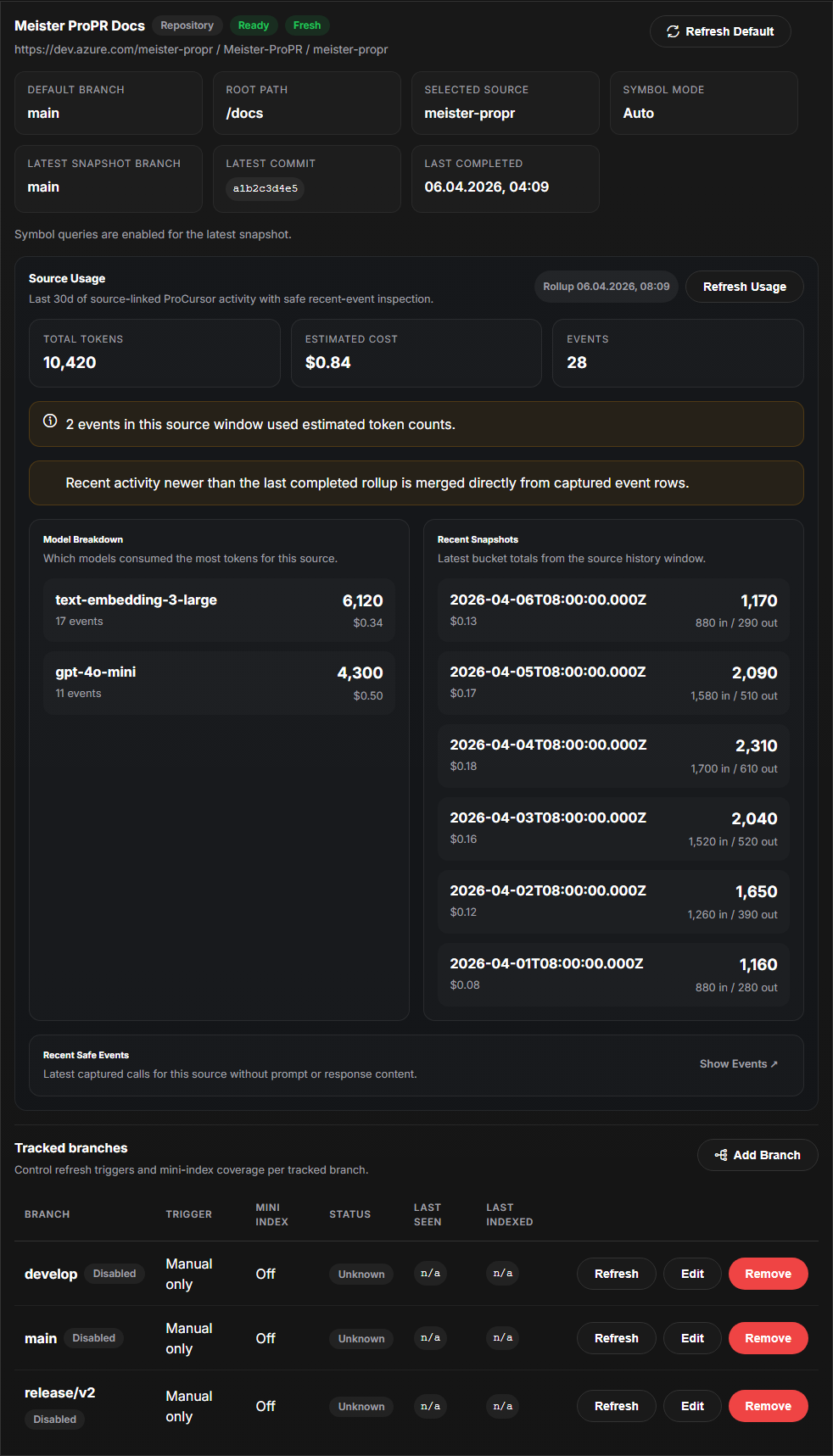

Govern ProCursor Sources

Register repository and wiki knowledge sources, verify freshness, inspect model usage per source, and jump from source rollups into safe event inspection without exposing prompt payloads.

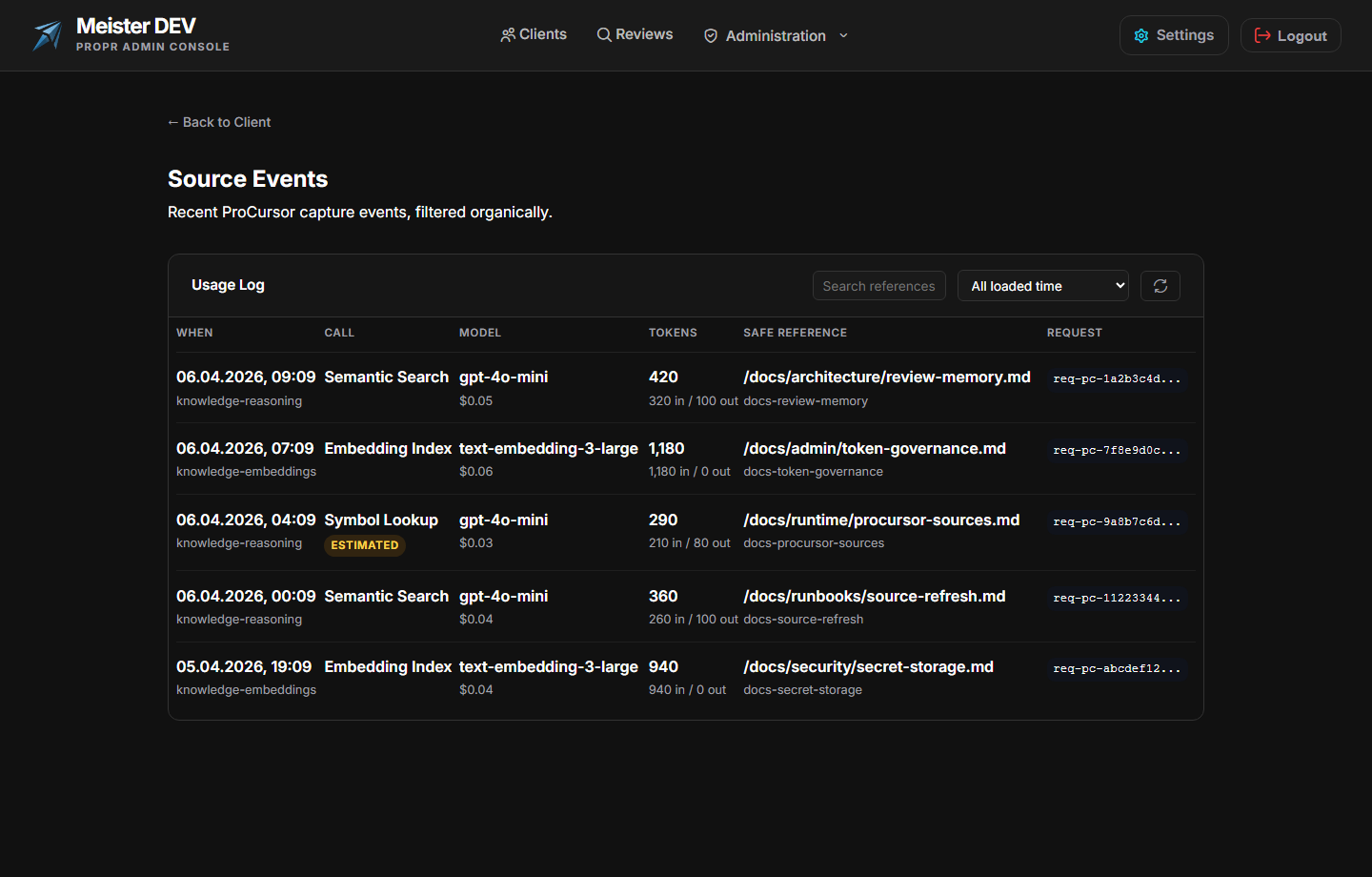

Audit ProCursor Source Events

Source Events gives administrators a safe reference log of indexing and retrieval calls, including timestamps, model usage, token counts, and document identifiers tied to a knowledge source.

How ProPR Works

ProPR is a self-hosted ASP.NET Core service that connects to Azure DevOps, GitHub, GitLab, and Forgejo-family providers, monitors pull requests and merge requests through background discovery or webhook intake, and posts deep AI reviews as threaded comments where your team already works.

It reviews changed files with cross-file awareness, records what happened during every pass, and reuses semantic memory from past review decisions to keep future reviews grounded in your team’s actual outcomes. Each client can also bind different AI providers and models to review, memory, and embedding workloads instead of sharing a single runtime choice.

Your source code never leaves your infrastructure. ProPR runs as a Docker container on your own servers. All provider API calls use credentials you control, with per-client isolation for SCM connections, AI profiles, and review behavior.

Info

No CI pipeline configuration required. Configure provider connections, scopes, webhooks, knowledge sources, AI profiles, and reviewer identity from the Admin Console, then let ProPR handle discovery and review orchestration in the background.

What Changed In The Latest Release

ProPR now supports Azure DevOps, GitHub, GitLab, and Forgejo-family SCM providers through a shared provider-connection model. Teams can authenticate per client, define provider scopes explicitly, resolve reviewer identity per provider, and activate review flows through crawlers or provider webhooks instead of relying on a single integration path.

AI connections are now configured as provider-neutral profiles. Each client can define Azure OpenAI, OpenAI, or LiteLLM connections, verify them independently, discover models, and bind specific runtimes to review, memory reconsideration, and embedding workloads without redeploying ProPR.

Resolved review threads and admin dismissals are stored as semantic memory records. On later reviews, ProPR can retrieve that context, reconsider similar findings, and suppress duplicates instead of rephrasing the same comment every time a pull request is updated.

The new PR Review view aggregates jobs, token totals, memory contributions, and model-tier usage for a pull request. Combined with Job Protocol execution traces, teams can move from the overall review story to the exact file-level evidence behind a finding.

Reviewer usage dashboards track model traffic over time, and ProCursor source usage shows the tokens, cost, and event counts attributed to each knowledge base. Teams can now inspect both review execution and knowledge retrieval as one operational story instead of guessing where spend accumulated.

ProCursor registers repository and wiki sources, tracks freshness by source and branch, and exposes safe recent-event inspection for indexing and retrieval activity. That makes it easier to trust knowledge-assisted reviews because admins can see which source was used, when it changed, and how much model traffic it generated.

Client-managed secrets are protected before storage, embedding behavior is configured per client, and legacy global fallbacks have been removed. That gives each client a clearer configuration boundary for AI connections, embeddings, and review behavior.

Designed For Signal, Not Noise

The new capabilities build on the same core behavior that makes ProPR practical in production:

- Reviews across Azure DevOps, GitHub, GitLab, and Forgejo-family hosts
- Webhook automation plus background discovery for review intake
- Azure OpenAI, OpenAI, and LiteLLM profiles with purpose-bound model selection
- Diff-first review context with on-demand file expansion
- Threaded comments anchored to exact files and lines
- Review and knowledge token reporting for governance and cost tracking
- Source freshness plus safe recent-event logs for ProCursor knowledge bases
- Prompt overrides and reviewer guidance without redeploying
- Multi-client isolation for agencies and platform teams
- Self-hosted deployment so your code stays in your environment

Warning

Upgrading an existing deployment? Before enabling automation in production:

- Apply the included database migrations.
- Persist the ASP.NET Core Data Protection key ring.
- Set MEISTER_PUBLIC_BASE_URL if webhook listeners sit behind a reverse proxy.
- Verify that each client has both provider connections and AI purpose bindings configured.
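Parts of this checklist can be scripted. The pre-flight sketch below covers only the last two items and is not shipped with ProPR: the function names, the list of required purposes, and the way client data is passed in are assumptions, and only the MEISTER_PUBLIC_BASE_URL variable name comes from the warning above. Database migrations and the Data Protection key ring still need to be verified separately.

```python
from urllib.parse import urlsplit

# Assumed purpose identifiers; adjust to match your actual bindings.
REQUIRED_PURPOSES = ("review", "memory_reconsideration", "embedding")


def check_public_base_url(env, behind_reverse_proxy):
    """Return a list of problems with MEISTER_PUBLIC_BASE_URL (empty if none)."""
    problems = []
    value = env.get("MEISTER_PUBLIC_BASE_URL", "").strip()
    if not value:
        if behind_reverse_proxy:
            problems.append("MEISTER_PUBLIC_BASE_URL is not set")
        return problems
    parts = urlsplit(value)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        problems.append("MEISTER_PUBLIC_BASE_URL is not an absolute http(s) URL")
    return problems


def check_client(name, provider_connection_count, bound_purposes):
    """Return a list of problems for one client (empty if none)."""
    problems = []
    if provider_connection_count < 1:
        problems.append(f"{name}: no provider connections configured")
    missing = [p for p in REQUIRED_PURPOSES if p not in bound_purposes]
    if missing:
        problems.append(f"{name}: missing AI purpose bindings: {', '.join(missing)}")
    return problems
```

A deployment script could call `check_public_base_url(os.environ, behind_reverse_proxy=True)` and refuse to enable automation while any problem list is non-empty.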